Mike Izbicki's Research Page

About me

I'm an assistant professor at Claremont McKenna College. My research develops new machine learning algorithms for large-scale social media problems. I particularly like developing algorithms with strong theoretical guarantees and using these guarantees to achieve better real-world performance. The main theoretical tools I use come from high-dimensional probability, computational geometry, and numerical optimization. Outside of machine learning, I do a lot of work with Haskell and open source software.

You can contact me at mike@izbicki.me, and you can download my full CV here.

Selected Projects

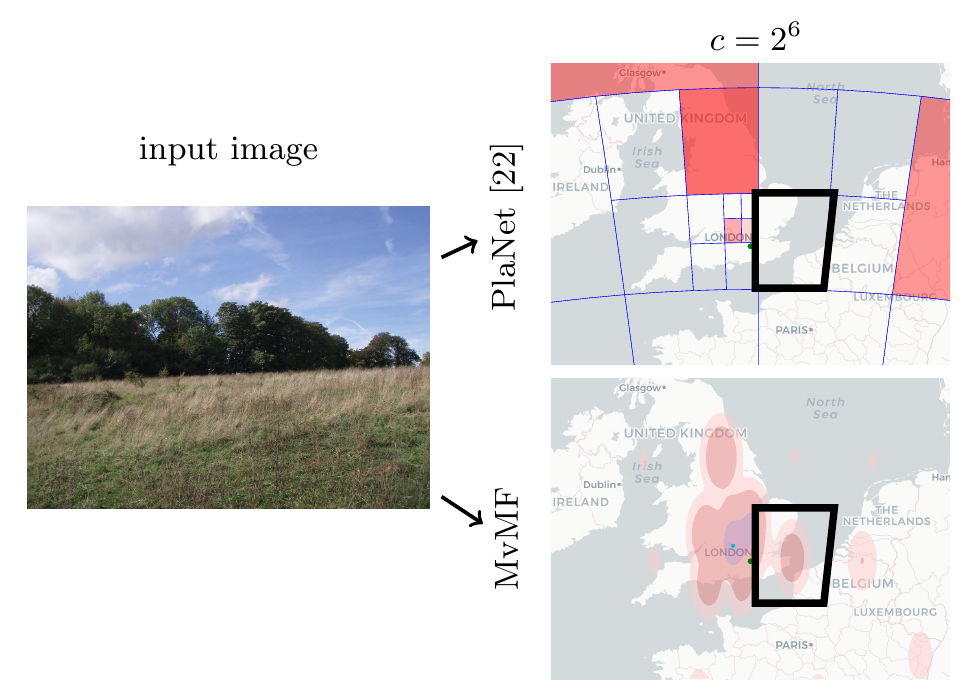

Geolocating Social Media

Social media messages are sent from all over the world, in hundreds of languages.

Unfortunately, most research on social media only studies English-language messages sent from Western countries, ignoring billions of people.

To understand how these people use social media, I've developed techniques for understanding the content of images and text written in any language, and used these techniques to predict which region of the world the content was created in.

These techniques have applications such as understanding how political opinions differ throughout the world and measuring the effects of climate change.

Papers:

- Mike Izbicki, Vagelis Papalexakis, and Vassilis Tsotras. Exploiting the Earth's Spherical Geometry to Geolocate Images, ECML-PKDD 2019.

- Mike Izbicki, Vagelis Papalexakis, and Vassilis Tsotras. Geolocating Tweets sent from any location in any language, under review.
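One reason the Earth's spherical geometry matters when predicting where content was created: treating latitude and longitude as flat coordinates badly misjudges distances near the date line. The sketch below is only an illustration of that point and is not the method from the papers above. The function names and the Earth-radius constant are assumptions chosen for this example.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed mean Earth radius (an approximation)

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # min() guards against tiny floating-point overshoot above 1.0
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))

def naive_degree_distance(lat1, lon1, lat2, lon2):
    """Flat-plane distance in degrees; ignores that longitude wraps around."""
    return math.hypot(lat2 - lat1, lon2 - lon1)

# Two points on the equator, one degree either side of the date line.
print(haversine_km(0, 179, 0, -179))           # small: the points are neighbors
print(naive_degree_distance(0, 179, 0, -179))  # large: the flat view puts them far apart
```

A prediction error measured with the flat distance would penalize a model heavily for a guess that is actually close on the globe.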

Robust Stochastic Gradient Descent

Stochastic gradient descent (SGD) is the main algorithm used to train large-scale modern machine learning models such as deep neural networks.

Unfortunately, it can fail when a malicious adversary has corrupted the data.

I showed that a simple technique called gradient clipping makes SGD robust to these attacks, both in theory and in practice.

Papers:

- Mike Izbicki. Gradient clipping makes stochastic gradient descent robust to adversarial noise, under review.

Distributed Learning

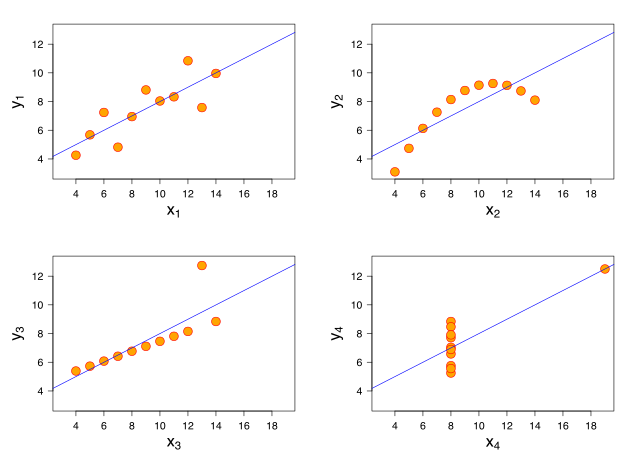

I'm interested in map-reduce style distributed learning algorithms.

These algorithms are easy to implement, fast, and can provide privacy guarantees.

My latest work introduces a new algorithm that improves accuracy without losing speed.

My previous work shows that map-reduce style algorithms come with cross-validation performance estimates essentially for free.

Papers:

- Mike Izbicki, Christian R. Shelton. Communication Efficient Distributed Machine Learning with the Optimal Weighted Average, ECML-PKDD 2019.

- Mike Izbicki. Algebraic Classifiers, International Conference on Machine Learning (ICML), 2013.

My dissertation contains an expanded description of this work that's a lot more approachable.
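To give a feel for why map-reduce style learning can make cross-validation nearly free, here is a toy sketch. It assumes a model whose trained state can be merged cheaply: each shard is trained once (the map step), models are combined with a merge operation (the reduce step), and a k-fold estimate reuses the per-fold models instead of retraining. The `MeanModel` class and all function names are hypothetical and far simpler than the algorithms in the papers above.

```python
from dataclasses import dataclass
from functools import reduce

@dataclass(frozen=True)
class MeanModel:
    """Toy 'model' that predicts the mean of its training data."""
    count: int = 0
    total: float = 0.0

    @staticmethod
    def train(xs):
        return MeanModel(len(xs), float(sum(xs)))

    def merge(self, other):
        # Merging two trained models equals training on the combined data.
        return MeanModel(self.count + other.count, self.total + other.total)

    def predict(self):
        return self.total / self.count if self.count else 0.0

def map_reduce_train(shards):
    """Train on every shard independently (map), then merge the models (reduce)."""
    return reduce(MeanModel.merge, (MeanModel.train(s) for s in shards), MeanModel())

def cross_validate(folds):
    """k-fold mean squared error using one training pass per fold plus cheap merges."""
    models = [MeanModel.train(f) for f in folds]
    errors = []
    for i, held_out in enumerate(folds):
        others = (m for j, m in enumerate(models) if j != i)
        pred = reduce(MeanModel.merge, others, MeanModel()).predict()
        errors.extend((x - pred) ** 2 for x in held_out)
    return sum(errors) / len(errors)

print(map_reduce_train([[1, 2], [3], [4, 5, 6]]).predict())
print(cross_validate([[1.0, 3.0], [2.0, 2.0]]))
```

The expensive `train` call runs once per fold; every held-out estimate after that costs only merges.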

Faster Nearest Neighbor Queries

The cover tree data structure is used to speed up nearest neighbor queries.

I simplified the definition of the cover tree and proposed a number of modifications that improve the data structure's real-world performance.

I'm currently working on ways to further improve the theoretical bounds.

Papers:

- Mike Izbicki, Christian R. Shelton. Faster Cover Trees, International Conference on Machine Learning (ICML), 2015.

- Chapter 4 of my dissertation has a number of additional, currently unpublished results.

Protecting the Power Grid

Modern smart grids can be damaged by malicious loads.

Identifying these malicious loads in large power networks is a difficult challenge.

I helped design a new algorithm for identifying malicious loads that is more scalable than previous algorithms.

The method uses a low-rank modification of the unscented Kalman filter.

Papers:

- Mike Izbicki, Sajjad Amini, Christian R. Shelton, Hamed Mohsenian-Rad. Identification of destabilizing attacks in power systems, American Control Conference (ACC), 2017. Source code is available on the GitHub page.

Haskell Projects

I like exploring the ways that functional programming can make writing numerical code (and especially machine learning code) easier.

Some of my open source projects are:

- HerbiePlugin automatically rewrites mathematical expressions in Haskell code to be numerically stable.

- Subhask is an alternative standard library for Haskell. It provides type-safe linear algebra operations, and the interface is considerably more flexible than standard tools like MATLAB and NumPy.

- HLearn is a machine learning library for Haskell. Unfortunately, Haskell's existing type system is too limited for some of the features I need, so this project is on temporary hiatus. Once Haskell gets proper dependent types, I hope to resume working on this project. An old version of the project is described in this paper presented at TFP2013, but the library has advanced considerably since then and the paper is now out of date.
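As a rough illustration of what rewriting an expression to be numerically stable means (the goal HerbiePlugin is described as automating), the sketch below compares a textbook expression with an algebraically equivalent rearrangement. It is written in Python purely for illustration, the function names are hypothetical, and the rewrite shown is a standard example rather than output produced by HerbiePlugin.

```python
import math

def naive_diff(x):
    """sqrt(x + 1) - sqrt(x): suffers catastrophic cancellation for large x."""
    return math.sqrt(x + 1) - math.sqrt(x)

def stable_diff(x):
    """Same quantity, rewritten as 1 / (sqrt(x + 1) + sqrt(x)) to avoid the subtraction."""
    return 1.0 / (math.sqrt(x + 1) + math.sqrt(x))

x = 1e16
print(naive_diff(x))   # the subtraction cancels every significant digit
print(stable_diff(x))  # close to the true value, about 5e-9
```

Both functions compute the same mathematical quantity; only the floating-point behavior differs.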

I also have a number of projects that explore the limits of the Haskell type system:

- homoiconic automatically constructs FAlgebras that are isomorphic to a type class. The FAlgebras can then be manipulated as ordinary Haskell objects. The end result is the addition of a Lisp-like homoiconicity feature for Haskell.

- ifcxt proposes an extension to Haskell's type system to allow constraints to include if statements. This lets us write faster, more generic code.

- typeparams provides "lenses" at the type level to facilitate working with complex types.

- parsed is a rewrite of the classic Haskell parsec library in bash. See also the associated SIGBOVIK paper Bashing Haskell.

Improving Contributions to Open Source Software

At UC Riverside, I designed and taught a course on open source software construction. The course won 3rd place in the 2015 SIGCSE Student Research Competition. I also presented the course at the ICOPUST conference in the DPRK (North Korea). I helped DPR Korean students create the first open source contributions from their country.

Papers:

- Mike Izbicki, Open Sourcing the Classroom, ICOPUST 2015.

Some open source projects I've helped undergrads start:

- BrightTime is an Android app that adjusts screen brightness to save battery life.

- The git game and git game v2 are terminal-based games that teach the player how to use advanced git commands.

- Melody Matcher is a web-based game for musicians to train their ears.

- PacVim is a Pac-Man clone in which the player controls Pac-Man with vim commands. The purpose is to teach the player advanced vim movement in a fun way.

- regexProgram is a regular expression quiz generator.